Google has released Gemini Embedding 2, a new AI model designed to unify how machines understand and retrieve information from different media types. This isn’t just an incremental upgrade; it’s a fundamental shift in how AI processes data, potentially cutting costs and boosting speed for businesses that rely on AI-powered insights.

The Problem with Previous Embedding Models

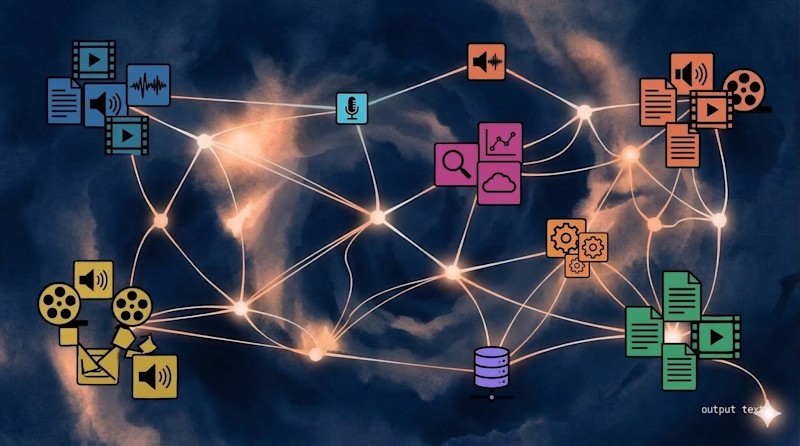

Traditional embedding models, the engines behind search, recommendations, and enterprise AI, have historically focused on text. To analyze images, videos, or audio, pipelines built on these models typically converted the media to text first (for example, through transcription or captioning), adding steps that introduced errors and slowed performance. Gemini Embedding 2 eliminates this bottleneck by natively integrating text, images, video, audio, and documents into a single mathematical space.

How Gemini Embedding 2 Works: The “Universal Library” Analogy

Think of an old-fashioned library organized by categories versus a futuristic one where books arrange themselves based on their essence. This is what an embedding model does: it converts complex data into numerical coordinates in a high-dimensional map. Similar items cluster together, regardless of format. A photo of a golden retriever and the phrase “man’s best friend” would sit side-by-side, while a sunset poem would drift towards a Pacific Coast photograph.
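To make the "clustering" idea concrete, here is a minimal sketch of how closeness between embeddings is commonly measured with cosine similarity. The four-dimensional vectors and their labels are made up for illustration; they are not output from Gemini Embedding 2, and the article does not say which similarity metric the model is meant to be used with.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings, invented for this example only.
toy_embeddings = {
    "photo: golden retriever": [0.9, 0.1, 0.0, 0.2],
    "text: man's best friend": [0.8, 0.2, 0.1, 0.3],
    "text: sunset poem": [0.1, 0.9, 0.7, 0.0],
}

def nearest(query_key, embeddings):
    """Return the key of the other item most similar to the query item."""
    query = embeddings[query_key]
    candidates = [key for key in embeddings if key != query_key]
    return max(candidates, key=lambda key: cosine_similarity(query, embeddings[key]))

print(nearest("photo: golden retriever", toy_embeddings))
```

With these toy values, the dog photo lands closest to the phrase about dogs rather than the poem, even though one item is an image and the other is text. That is the behavior a shared embedding space is meant to provide.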

Gemini Embedding 2 maps all media into a unified 3,072-dimensional space, allowing developers to search across formats without separate systems for image, text, or video. The model also uses Matryoshka Representation Learning, a training technique that concentrates the most important information in the leading dimensions of each vector, so embeddings can be shortened for efficiency with limited loss in quality.
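In practice, Matryoshka-style embeddings are usually shortened by keeping the leading dimensions and rescaling the result to unit length. The sketch below shows that general step on a synthetic vector. The function name and the 768-dimension target are illustrative choices, not values taken from the article, and how much quality survives truncation depends on how the model was trained.

```python
import math

def truncate_and_normalize(vector, dims):
    """Keep the first `dims` values and rescale them to unit length."""
    if not 0 < dims <= len(vector):
        raise ValueError("dims must be between 1 and len(vector)")
    head = vector[:dims]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0:
        raise ValueError("cannot normalize an all-zero vector")
    return [x / norm for x in head]

# Synthetic stand-in for a 3,072-dimensional embedding (not real model output).
full_vector = [1.0 / (i + 1) for i in range(3072)]

# 768 is an arbitrary smaller size chosen for this example.
short_vector = truncate_and_normalize(full_vector, 768)
print(len(short_vector))
```

Shorter vectors reduce storage and speed up similarity search, which is the efficiency trade-off this technique is meant to enable.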

Why This Matters: Efficiency and Accuracy

The shift to a natively multimodal architecture delivers tangible benefits:

- Reduced Latency: Some early testers report up to 70% faster processing times.

- Lower Costs: By eliminating intermediate “translation” steps, enterprises can save on computational resources.

- Deeper Understanding: The model understands audio as sound and video as motion directly, capturing nuances lost in text-only analysis.

Companies like Sparkonomy have already seen significant efficiency gains, while Everlaw is using the model to navigate complex legal discovery tasks.

Technical Specs: What Developers Need to Know

Each request can include up to 8,192 text tokens, six images, 128 seconds of video, 80 seconds of audio, and six PDF pages. These are per-request input limits, not storage caps: the system can still handle millions of documents.
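A simple pre-flight check can catch oversized requests before they are sent. The sketch below encodes the limits quoted above in a dictionary; the field names and the `over_limit` helper are hypothetical and do not correspond to any official SDK.

```python
# Per-request limits as quoted in this article; the key names are illustrative.
LIMITS = {
    "text_tokens": 8192,
    "images": 6,
    "video_seconds": 128,
    "audio_seconds": 80,
    "pdf_pages": 6,
}

def over_limit(request):
    """Return {field: (value, limit)} for every field that exceeds its limit."""
    unknown = set(request) - set(LIMITS)
    if unknown:
        raise KeyError(f"unknown fields: {sorted(unknown)}")
    return {
        field: (value, LIMITS[field])
        for field, value in request.items()
        if value > LIMITS[field]
    }

# Example: too many text tokens, but an acceptable number of images.
print(over_limit({"text_tokens": 9000, "images": 2}))
```

An empty result means the request fits within the stated limits; anything else needs to be split or trimmed first.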

Google offers tiered pricing through the Gemini API and Vertex AI:

- Free Tier: Limited access for experimentation.

- Paid Tier: $0.25 per million tokens for text, images, and video; $0.50 per million tokens for audio.
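For rough budgeting, the paid-tier rates above translate into simple arithmetic. The sketch below assumes token counts per media type are already known; the article does not explain how images, video, or audio are converted into tokens, so those counts are placeholders, and the rates should be checked against current official pricing.

```python
# Paid-tier rates in US dollars per million tokens, as quoted in this article.
PRICE_PER_MILLION_TOKENS = {
    "text": 0.25,
    "image": 0.25,
    "video": 0.25,
    "audio": 0.50,
}

def estimate_cost(token_counts):
    """Estimate cost in dollars from a {media_type: token_count} mapping."""
    return sum(
        tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS[media_type]
        for media_type, tokens in token_counts.items()
    )

# Placeholder workload: 4 million text tokens and 2 million audio tokens.
print(estimate_cost({"text": 4_000_000, "audio": 2_000_000}))
```

For this placeholder workload the estimate is $1.00 for text plus $1.00 for audio, or $2.00 in total.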

The model is also integrated with popular AI frameworks like LangChain and LlamaIndex, simplifying adoption. The code is licensed under Apache 2.0, allowing commercial use without royalty obligations.

Should Enterprises Migrate?

For organizations relying on fragmented AI pipelines, migrating to Gemini Embedding 2 is likely a strategic necessity. The model streamlines workflows, reduces errors, and lowers costs. The transition is made easier by API continuity and integration with existing tools.

However, enterprises must manage input limits by chunking large files (splitting them into segments) before processing. The real investment lies in re-indexing existing data to fully leverage the new capabilities.
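Chunking for the text limit can be sketched as a sliding window over an already tokenized document. The example below assumes the tokens are available as a Python list; the article does not specify a tokenizer, and the optional overlap between chunks is a common retrieval practice rather than something the article prescribes.

```python
def chunk_tokens(tokens, max_tokens=8192, overlap=0):
    """Split a token list into chunks of at most `max_tokens` tokens.

    Consecutive chunks share `overlap` tokens so context at the
    boundaries is not lost entirely.
    """
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be in [0, max_tokens)")
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + max_tokens])
        if start + max_tokens >= len(tokens):
            break  # the last chunk already reaches the end of the document
    return chunks

# Stand-in for a tokenized 20,000-token document.
document_tokens = list(range(20_000))
chunks = chunk_tokens(document_tokens, max_tokens=8192, overlap=192)
print([len(chunk) for chunk in chunks])
```

Each chunk is then embedded and indexed separately, which is why re-indexing existing data tends to be the larger part of a migration.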

The bottom line: Gemini Embedding 2 isn’t just another AI upgrade; it’s a step toward a more unified, efficient, and accurate way of processing information in the modern enterprise.